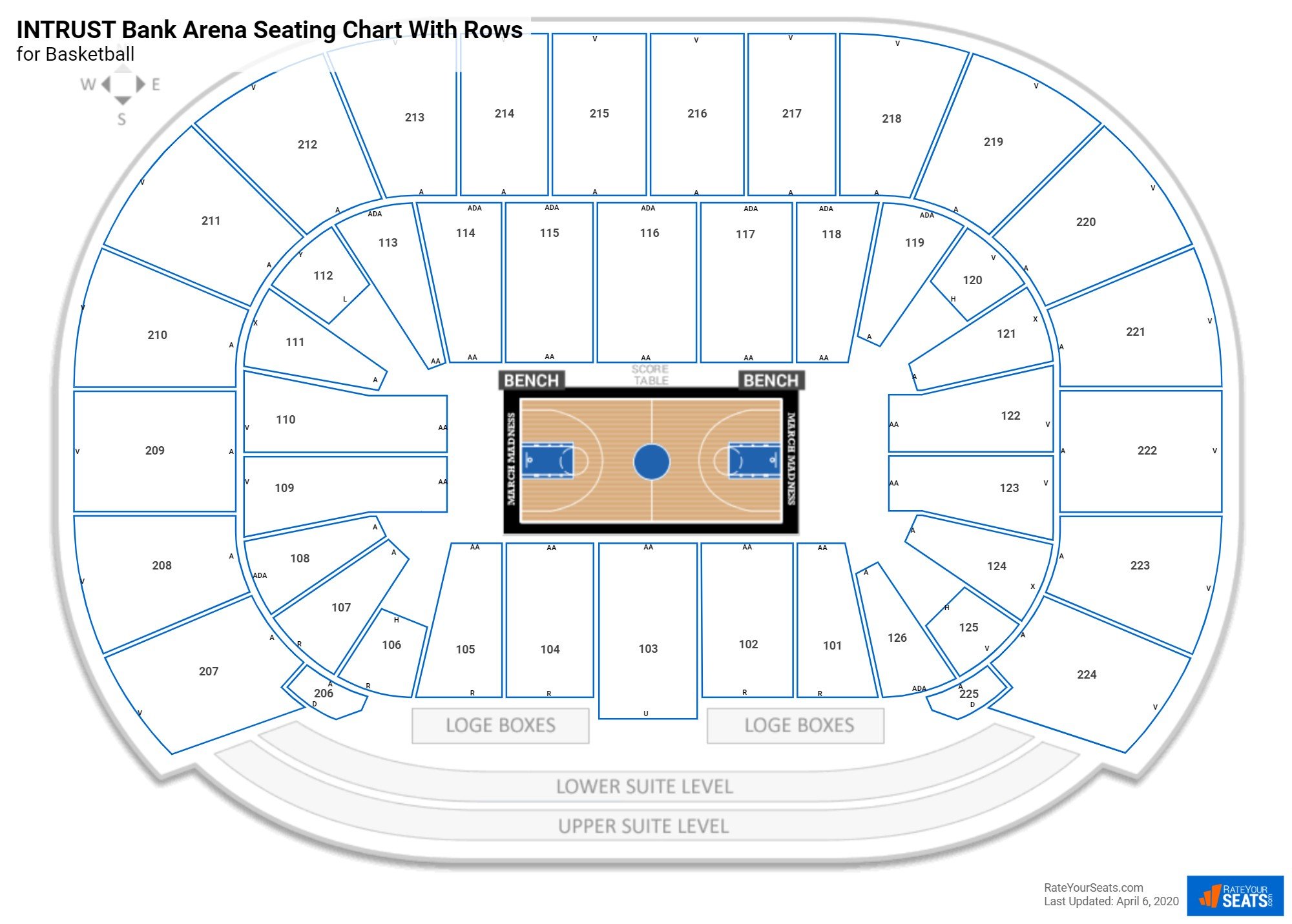

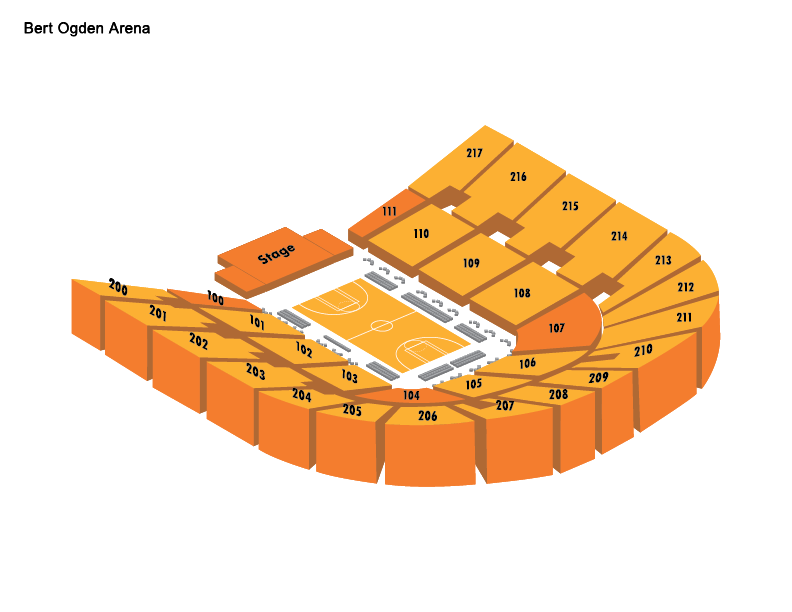

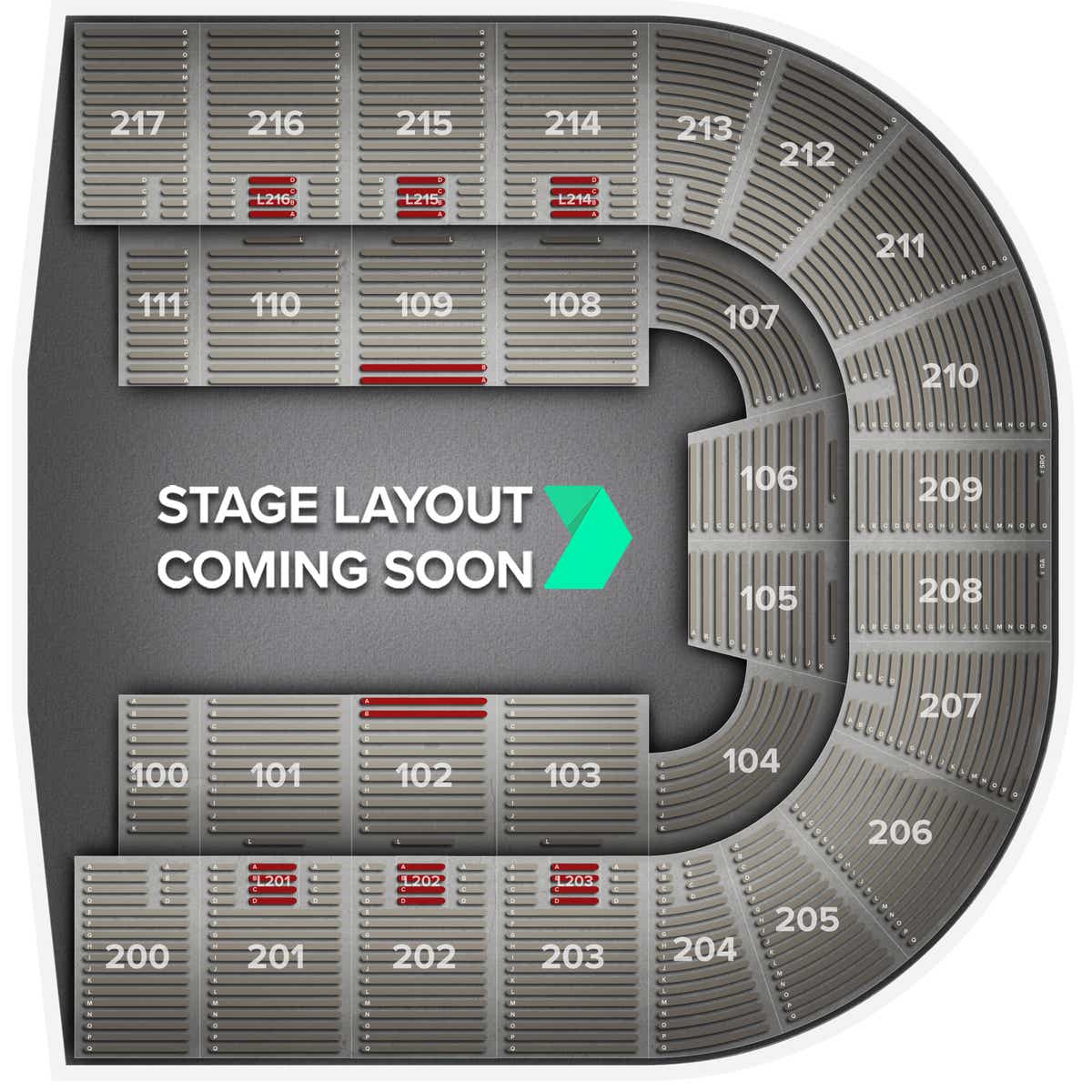

Bert Ogden Arena Seating Chart

BERT, which stands for Bidirectional Encoder Representations from Transformers, is a language model introduced in October 2018 by researchers at Google. [1][2] It is a bidirectional transformer pretrained on unlabeled text to predict masked tokens in a sentence and to predict whether one sentence follows another. Instead of reading sentences in just one direction, it reads them both ways, making sense of context.

BERT is also an open source machine learning framework for natural language processing (NLP) that helps computers understand ambiguous language by using context. In the following, we'll explore BERT models from the ground up: what they are, how they work, and, most importantly, how to use them practically in your projects.
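The two pretraining objectives mentioned above (predicting masked tokens and predicting whether one sentence follows another) can be illustrated with a small sketch of how training examples might be built. This is a simplified illustration in plain Python, not BERT's actual data pipeline: the function names, the whitespace tokenization, the `mask_prob` parameter, and the 50/50 split between true and random sentence pairs are all assumptions made for the example.

```python
import random

MASK = "[MASK]"  # placeholder token that hides the original word


def mask_tokens(tokens, mask_prob=0.15, seed=0):
    """Hide a random subset of tokens (hypothetical helper).

    Returns (masked, labels). labels[i] holds the original token where
    a mask was applied and None everywhere else.
    """
    rng = random.Random(seed)
    masked, labels = [], []
    for tok in tokens:
        if rng.random() < mask_prob:
            masked.append(MASK)
            labels.append(tok)   # a model would be trained to recover this
        else:
            masked.append(tok)
            labels.append(None)  # not a prediction target
    return masked, labels


def make_sentence_pair(sentences, index, rng):
    """Build one (first, second, is_next) example (hypothetical helper).

    Assumes at least three sentences so a non-adjacent one always exists.
    """
    first = sentences[index]
    if rng.random() < 0.5 and index + 1 < len(sentences):
        return first, sentences[index + 1], True
    # Otherwise pair with a sentence that is not the true successor.
    candidates = [s for i, s in enumerate(sentences)
                  if i not in (index, index + 1)]
    return first, rng.choice(candidates), False


if __name__ == "__main__":
    tokens = "the model reads the sentence in both directions".split()
    print(mask_tokens(tokens, mask_prob=0.3, seed=1))

    sentences = ["She opened the door.", "The room was dark.",
                 "Rain fell all night.", "He bought a ticket."]
    print(make_sentence_pair(sentences, 0, random.Random(2)))
```

Because a masked position can sit anywhere in the sentence, a model trained on such examples has to use the words on both sides of the gap, which is the sense in which the text is read "both ways".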

Arena Map Bert Ogden Arena

General Assembly Communication Info Edinburg Consolidated Independent School District

Bert Ogden Arena Seating Chart Portal.posgradount.edu.pe

Bert Ogden Arena Seating Chart For Basketball

Bert Ogden Arena Tickets, Seating Charts and Schedule in Edinburg TX at StubPass!

Bert Ogden Arena Seating Chart Behance

Bert Ogden Arena Tickets & Events Gametime
